For years, the artificial intelligence boom crowned GPUs as the undisputed kings of computing. Now, in a twist few expected, the company that led that revolution says the future may depend just as much on a far older technology: the CPU.

At the center of this shift is Nvidia CEO Jensen Huang, who built his empire on specialized graphics processors but is now openly embracing the very kind of chip whose market has long been dominated by rivals like Intel and Advanced Micro Devices.

“We love CPUs as well as GPUs,” Huang told analysts during the company’s latest earnings call—an unusually direct endorsement of a technology Nvidia once positioned as secondary in the AI era.

From GPU Domination to a More Balanced AI World

Over the past decade, Nvidia reshaped computing by proving that GPUs—designed to perform thousands of parallel calculations—were ideal for training artificial intelligence models. Huang often described the transformation bluntly: computing had flipped from 90% CPU-driven workloads to GPU-heavy processing.

But AI is evolving.

The industry is moving from training massive models to deploying them in real-world environments—powering AI agents that write code, analyze documents, and automate workflows continuously rather than crunching enormous datasets once.

That shift changes the hardware equation.

Inside Nvidia’s New Compute Vision

CPUs, long considered the “generalists” of computing, excel at handling diverse, sequential tasks—exactly the kind of workloads AI agents increasingly require.

“Agent-based computing is happening more and more, and sometimes primarily, on the CPU,” said Ben Bajarin of Creative Strategies.

In other words, GPUs may still train the brain—but CPUs are becoming essential to running it.

Why Deployment, Not Training, Is Driving Demand

Traditional AI development relied on brute-force math—perfect for GPUs performing simultaneous calculations across vast datasets. But once models are trained, they must operate efficiently, respond to queries, and manage streams of data in real time.

That operational phase leans heavily on CPUs’ flexibility and memory-handling strengths.

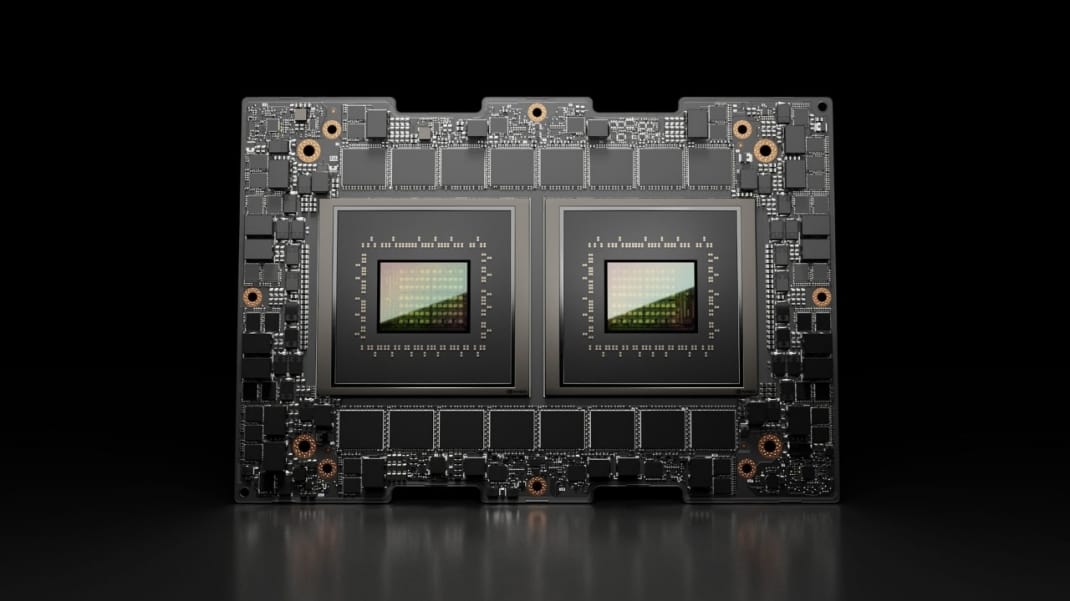

Nvidia’s flagship AI servers already reflect this hybrid reality. Systems that once heavily favored GPUs now integrate large numbers of CPUs alongside them, and analysts expect the gap between the two to narrow further, possibly reaching parity for certain “agentic” workloads.

A Direct Challenge to the Old Guard

Nvidia isn’t just acknowledging CPUs—it’s trying to reinvent them.

Recent partnerships underscore that ambition. Meta Platforms has agreed to deploy Nvidia’s data-center CPUs as standalone processors, a notable change from earlier architectures in which Nvidia’s CPUs primarily supported GPU-heavy configurations.

The move doesn’t replace existing suppliers—Meta continues working with AMD—but it signals a broader diversification of compute infrastructure as AI scales globally.

Huang argues Nvidia’s design philosophy differs fundamentally from that of its competitors. Rather than breaking processors into smaller chiplets, Nvidia is optimizing CPUs for high-throughput data processing, reflecting AI’s core need: moving and interpreting massive datasets quickly.

Redefining What “Core Computing” Means

Industry observers say Nvidia is attempting something larger than product expansion—it’s trying to redefine the hierarchy of modern computing.

Dave Altavilla of HotTech Vision and Analysis describes Nvidia’s strategy as an effort to show that the CPU is no longer the default foundation of computing, but rather one architectural option in a heterogeneous system where CPUs, GPUs, and specialized accelerators coexist.

This modular view reflects how AI data centers are increasingly built: not around a single dominant chip, but around clusters of purpose-driven processors working in tandem.

The AI Agent Boom Could Rewrite Chip Economics

The rise of autonomous AI agents—software capable of performing ongoing tasks without human prompting—is expected to further rebalance demand.

Unlike model training, which happens intermittently, agent-driven workloads run constantly. That creates sustained need for CPUs that can orchestrate logic, memory access, and decision-making layers while GPUs handle bursts of heavy computation.

If this pattern holds, Nvidia’s opportunity could expand dramatically—from selling accelerators for AI labs to supplying the foundational processors of everyday AI infrastructure.

Huang has gone so far as to suggest Nvidia could one day rank among the world’s largest CPU vendors—a striking statement from a company once defined entirely by graphics chips.

A Full-Circle Moment for Silicon Valley

The irony is hard to miss:

The technology that AI seemed poised to sideline is becoming critical again—only this time redesigned for an intelligence-driven world.

Rather than replacing CPUs, AI may be giving them a second life.

And Nvidia, the company that disrupted computing with GPUs, now wants to lead that comeback too.